A previous post talked about my need for some local, reliable storage in my home. That project led me to investigate some other options. Since I’m a big fan of Amazon S3, it seemed like something I should involve in my storage solution. The Elastician (Mitch Garnaat) and I bought the same hardware and are working through the setup together. Here’s the rundown of the hardware, including costs:

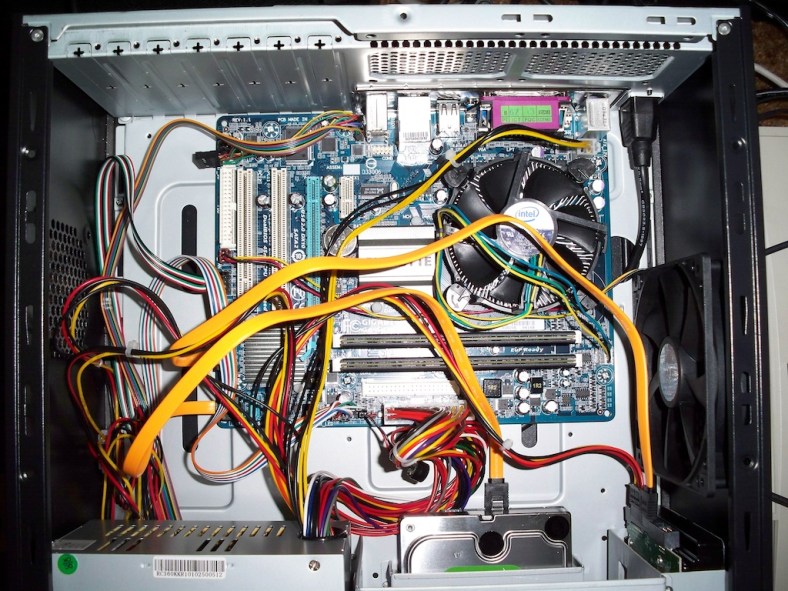

My previous post discusses the hardware and some of the choices in more detail. Here’s a picture of the inside of the case once things were assembled. The observant among you will notice that one of the drives doesn’t have power. That’s because the case power supply didn’t have 2 SATA power connectors and the adapter cable was on order when this picture was taken. I’ll also point out that this case isn’t ideal for mounting several 3.5″ drives. With adapters, I can fit 4 in there, true. However, next time I’d shop around for a case more to my liking.

Thinking more about the software to run on the NAS led me to several projects, including FreeNAS and OpenFiler. We decided to go with something we’re familiar with: Ubuntu. Ubuntu has instructions on their download page for creating a bootable flash drive. I tried the Mac OS X method and failed, so I resorted to the tool from pendrivelinux.com on the family Windows box. Their Universal USB Installer works well and created good, bootable flash drives every time.

Creating a Bootable Flash Drive

I tried the Ubuntu Server download, but that seems to be geared towards jumpstarting a server install vs running right off the flash drive. The Ubuntu Desktop was much more to my liking.

To get things going, I needed to connect a mouse, keyboard, and monitor. Once I configured the BIOS to boot from the USB HDD, it recognized the bootable flash drive and started bringing Ubuntu up. It seemed to take “forever” to boot. I hit “escape” to watch the console and found that it was timing out on the floppy drive, which I don’t have. I went into the BIOS settings to tell it there wasn’t a floppy drive attached, and boot time went WAY down!

I let the desktop come up, but since this is an install image, changes made aren’t saved. Having the 2nd flash drive will come in very handy now! Plug it into another USB port before proceeding. Select the “System”->”Administration” menus, then the “Install Ubuntu…” option.

A few steps in the install wizard require special mention. On step 4, select “erase and use the entire disk”, and select your flash drive (not one of the hard drives!). In step 5, after you’ve entered the required information, select “log in automatically”, which will help when running headless later. Now the most critical part: step 7 has an “advanced” button you need to click. Make sure you select the proper device, because it defaults to /dev/sda (the first hard drive). You need to select /dev/sdd, which is the last device connected (the target flash drive). Let the install proceed and you’ll have a bootable Ubuntu image we can start configuring.

Remote Desktop for Administration

Once it was up, I could use the desktop and configure Remote Desktop. I played with the default VNC server, but it seemed like the wrong option: it didn’t run unless I had a monitor attached. I did some digging and found that TightVNC is a popular alternative. There are a few steps to getting it installed and running at boot, detailed here.

For another means of access, it’s a good idea to install ssh (“apt-get install openssh-server”).

Configuring the RAID

The Disk Utility also has a menu option to configure the RAID. It uses mdadm, but I heard some folks talking about using lvm. Linux Mag has an article that talks about both. I decided to go with the built-in option.

Run “apt-get install mdadm” in a terminal window. You can then use “Disk Utility” (on the “System”->”Administration” menu). One thing I noticed is that if you play around with the RAID config or do your own partitioning of the drives, the RAID wizard isn’t really happy about using those drives. If this is the case, select each drive and then “Format Drive”. Select the “Don’t Partition” option to reset the drive state. You’ll find that you can now select the drives in the RAID setup wizard.

I’ve set the drives up in a RAID 0 config. Prior to doing this, I did a performance test on a single drive and got an average read rate of 84MB/sec. Once the RAID was configured and formatted, I ran the same performance test and got a read rate of 155MB/sec, which is approaching double the speed! Now that’s what I was hoping for!
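To put a number on “approaching double”, here is a quick back-of-the-envelope calculation using the two read rates above. The drive count of 2 is an assumption taken from the two partitions listed in the mdadm.conf lines further down, and the “ideal” figure assumes perfect linear scaling across drives.

```python
# Read rates quoted in the post, in MB/sec.
single_drive_rate = 84.0
raid0_rate = 155.0

# Assumption: two drives in the stripe (two partitions appear in mdadm.conf).
drive_count = 2

speedup = raid0_rate / single_drive_rate
ideal_rate = single_drive_rate * drive_count  # perfect linear scaling
efficiency = raid0_rate / ideal_rate

print(f"speedup: {speedup:.2f}x")
print(f"share of the ideal {ideal_rate:.0f} MB/sec: {efficiency:.0%}")
```

With these figures the array reads about 1.85 times faster than a single drive, or roughly 92% of what perfect scaling would give.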

To get the RAID started at boot time, edit the /etc/mdadm/mdadm.conf file and replace the existing “DEVICE” line with these 2 lines:

DEVICE /dev/sda1 /dev/sdb1

ARRAY /dev/md0 devices=/dev/sda1,/dev/sdb1 auto=yes

Next, run “dpkg-reconfigure mdadm” and accept the defaults. Thanks to goldfisch.at for the help.
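If you have a different number of drives, the two mdadm.conf lines follow a simple pattern. Here is a minimal sketch that builds them from a list of member partitions. The helper name `mdadm_conf_lines` is made up for illustration, and it only produces text in the same shape as the lines above; it does not touch the config file or validate the devices.

```python
def mdadm_conf_lines(array, members):
    """Build DEVICE and ARRAY lines in the same shape as the ones in the post.

    `array` is the md device (e.g. /dev/md0) and `members` is the list of
    partitions that make up the array. Hypothetical helper, text only.
    """
    if len(members) < 2:
        raise ValueError("expected at least two member partitions")
    device_line = "DEVICE " + " ".join(members)
    array_line = f"ARRAY {array} devices={','.join(members)} auto=yes"
    return [device_line, array_line]


for line in mdadm_conf_lines("/dev/md0", ["/dev/sda1", "/dev/sdb1"]):
    print(line)
```

Note the two separators: the DEVICE line is space-separated, while the devices= list is comma-separated.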

Now, to get it mounted, add this line to /etc/fstab:

/dev/md0 /media/RAID ext4 rw,nosuid,nodev,uhelper=udisks 1 2

I might have been able to say “defaults” in that options column, but I took what was there when I mounted the RAID manually using the disk utility.
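For anyone unsure which column is which, an fstab line has six whitespace-separated fields: device, mount point, filesystem type, mount options, dump flag, and fsck pass order. The sketch below splits the line into named fields. The `FstabEntry` and `parse_fstab_line` names are made up for illustration, the mount point is assumed to be /media/RAID, and the parser insists on all six fields even though real fstab files may omit the last two.

```python
from typing import List, NamedTuple


class FstabEntry(NamedTuple):
    device: str
    mount_point: str
    fs_type: str
    options: List[str]
    dump: int
    fsck_pass: int


def parse_fstab_line(line):
    """Split one fstab line into its six fields (hypothetical helper)."""
    fields = line.split()
    if len(fields) != 6:
        raise ValueError(f"expected 6 fields, got {len(fields)}")
    device, mount_point, fs_type, options, dump, fsck_pass = fields
    return FstabEntry(device, mount_point, fs_type,
                      options.split(","), int(dump), int(fsck_pass))


entry = parse_fstab_line(
    "/dev/md0 /media/RAID ext4 rw,nosuid,nodev,uhelper=udisks 1 2"
)
print(entry.mount_point)
print(entry.options)
```

The options column is the fourth field, so that is where “defaults” would go if you wanted to try it.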

Sharing the Storage

Initially, I’m setting up Samba to share with my household machines. I found this article at ubuntu.com to help me. I’m concerned with privacy, not because I don’t trust my family, but because I plan on backing up my laptop and I don’t want others messing with my files.

I created a “data” directory on the RAID drive. Right-click on that folder and select “sharing options”. It brings up a dialog, and if you click “share this folder”, you’ll get prompted to install some packages (do it!). I discovered that I needed to use “smbpasswd” to set the share password. I’ll probably need to do this for each user I create to access the RAID.

The Amazon S3 Backup

For the Amazon S3 backup part, we’ve tossed around a number of different options. S3sync isn’t bad, but it doesn’t allow for threaded uploads, and there’s the issue of how often we kick it off. We asked, “What about running an S3-based filesystem and doing a RAID 1 on top of that and the RAID 0 local drives?” That might be OK, but how about traffic control? What block size do we use, and what penalty do we pay for a larger block size when storing small files? Where do we store the local cache? Do we even want a local cache since we have a local disk array? Along those lines, we looked at S3Backer and others.
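To get a rough feel for the block size question, here is a small calculation of how much space a small file would take if storage is allocated in whole blocks. This is only a sketch under that assumption: a particular S3 filesystem may store a short final block or compress blocks, so treat the numbers as a worst case. The function name `stored_size` and the 2 KB example file are made up for illustration.

```python
def stored_size(file_size, block_size):
    """Bytes consumed if a file is stored in whole blocks of block_size."""
    if block_size <= 0:
        raise ValueError("block_size must be positive")
    blocks = -(-file_size // block_size)  # ceiling division on integers
    return blocks * block_size


small_file = 2 * 1024  # a 2 KB file, chosen only as an example
for block_size in (4 * 1024, 64 * 1024, 1024 * 1024):
    used = stored_size(small_file, block_size)
    wasted = used - small_file
    print(f"block {block_size:>8} bytes: stores {used:>8}, pads {wasted:>8}")
```

Under this model the padding grows with the block size, which is the penalty in question when the array holds lots of small files.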

What is the solution when you don’t really think the available options are great? Write your own! We think that we can write a daemon tied into the file system notification (pyinotify) and use boto for the S3 part. Stay tuned… I smell another open source project!
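To make the idea a little more concrete, here is a rough sketch of the change-detection half of such a daemon. It is not the design we will end up with. It swaps file system notification for plain polling with the standard library so the example stays self-contained, and the actual S3 upload is left as a callable you pass in (with boto or anything else). All function names and the “backup” key prefix are made up for illustration.

```python
import os


def snapshot(root):
    """Map each file's path relative to root to its (mtime_ns, size)."""
    state = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            full = os.path.join(dirpath, name)
            try:
                info = os.stat(full)
            except OSError:
                continue  # file vanished between listing and stat
            state[os.path.relpath(full, root)] = (info.st_mtime_ns, info.st_size)
    return state


def changed_files(before, after):
    """Relative paths that are new or modified in `after`."""
    return sorted(p for p, sig in after.items() if before.get(p) != sig)


def s3_key(rel_path, prefix="backup"):
    """Turn a relative local path into a forward-slash object key."""
    return prefix + "/" + rel_path.replace(os.sep, "/")


def sync_once(root, before, upload):
    """Call upload(local_path, key) for each changed file; return new state.

    `upload` is a hypothetical callable supplied by the caller; this
    sketch never talks to S3 itself.
    """
    after = snapshot(root)
    for rel in changed_files(before, after):
        upload(os.path.join(root, rel), s3_key(rel))
    return after
```

A daemon would call `sync_once` in a loop with a sleep in between, feeding the returned state back in as `before`. A notification-based version would replace `snapshot` and `changed_files` with events from the kernel, which avoids rescanning the whole array on every pass. Deletions are ignored here on purpose, since what a backup should do with them is a separate decision.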

